CodeScene ACE

Fix complex code with AI

Imagine a world where code improves as you work on it. While others focus on generating code, CodeScene ACE helps you maintain and improve what already exists.


Seamless IDE integration

IDEs supported

CodeScene's IDE extension integrates with popular IDEs used by development teams today, with more options on the way.

Fix technical debt that you don't have time for


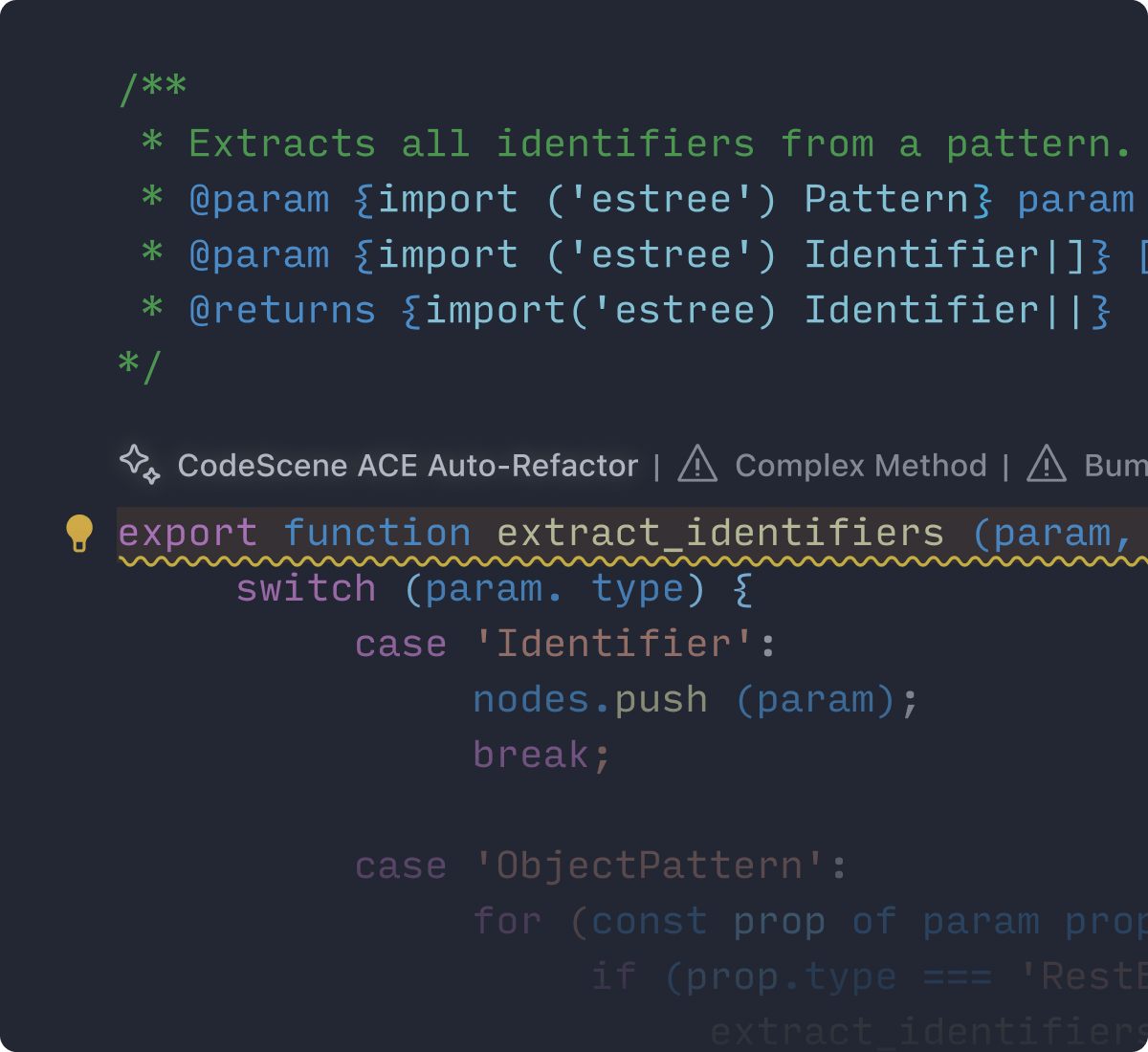

Refactor as you go, ship faster

ACE proposes better ways to structure your code on the fly, so you can write clean, maintainable code without breaking your flow. This is the most cost-effective time to refactor, because the logic is still fresh in your mind. It’s also the right time: no more drowning in technical debt.

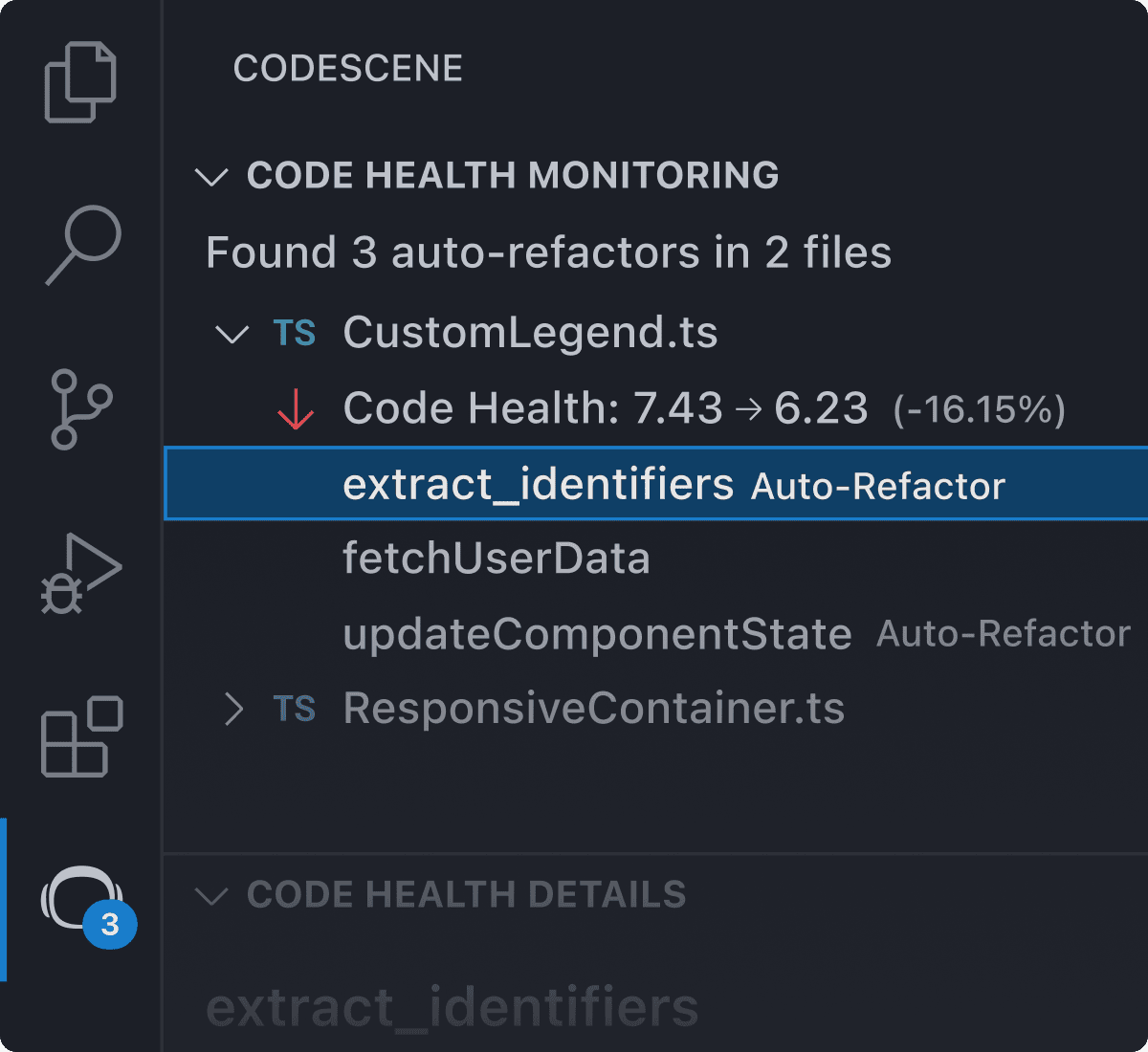

Automatic quality gates

Quality check and fix AI code

Get real-time code health monitoring for your AI coding assistants. When a code smell appears, our AI-powered add-on safely refactors issues without major rewrites. Only healthy code is pushed to production.

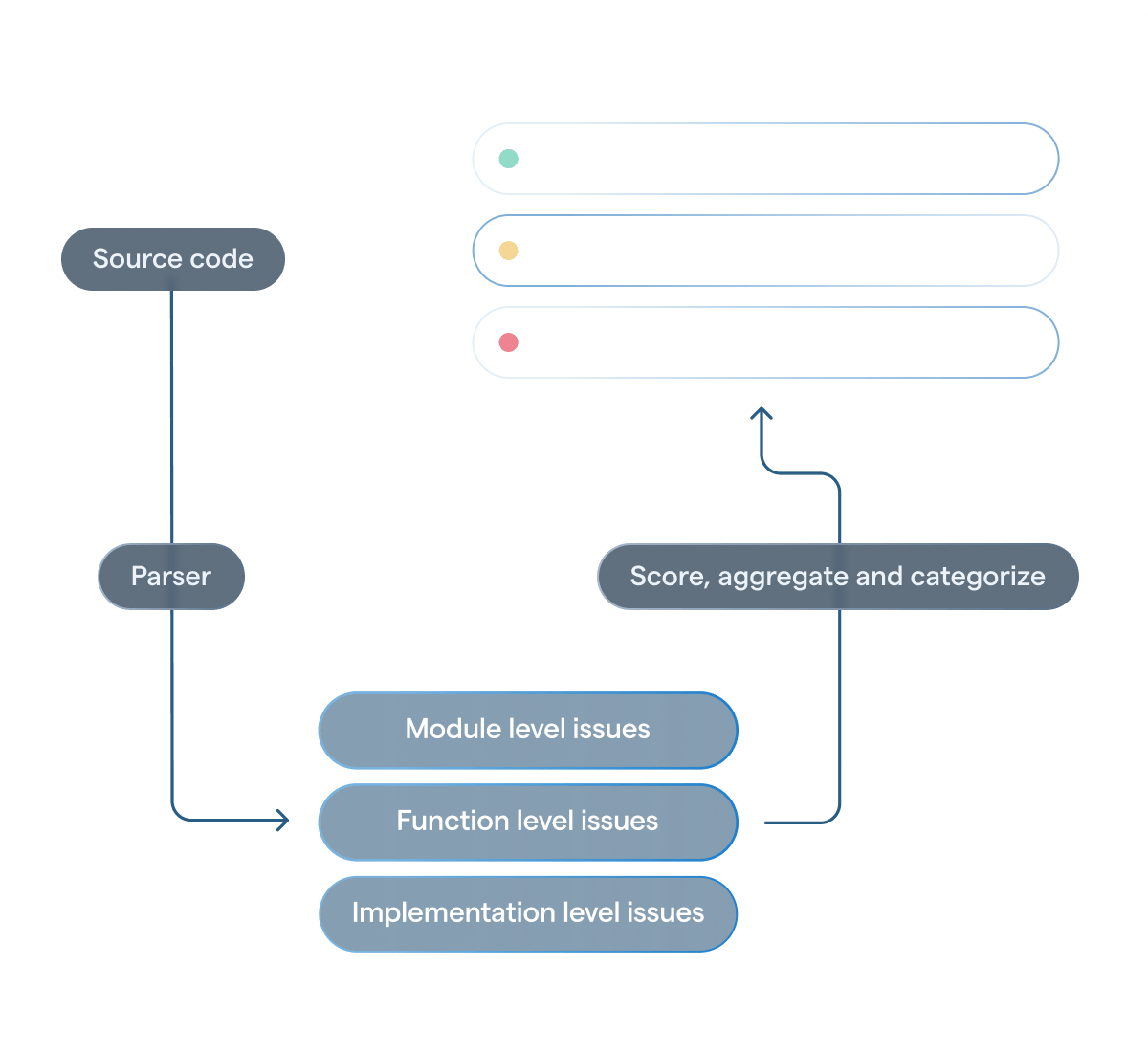

Industry's leading Code Health KPI

Metrics you can trust

Code quality is often subjective, but ACE relies on the proven Code Health Metric to offer clear, research-based recommendations. This means cleaner code, shorter development cycles and fewer bugs.

Fact-based metrics, real-time improvements

Fact-checked refactorings

ACE’s AI refactorings are fact-checked. LLMs are just one part of a delivery chain that validates every suggestion for accuracy and confirms that it leads to a measurable reduction in technical debt. Your code stays functional, just better.

Save time on complex code

The future of AI-powered coding is here

Software maintenance accounts for over 90% of a product's lifecycle costs, with developers spending 70% of their time understanding existing code. That's why we've designed a solution that simplifies your work, helping you focus on what truly matters.

Let ACE do the heavy lifting

Refactoring shouldn’t be a burden. ACE does the heavy lifting of improving your existing code, freeing you up for the creative side of development. With minimal, targeted refactorings, ACE simplifies your tasks without massive rewrites, so you can review, confirm, and apply changes quickly.

Quantifiable gains, measurable ROI

ACE delivers more than cleaner code – it drives ROI. Show your team and stakeholders the value of addressing technical debt: shorter lead times, fewer defects, and faster delivery without sacrificing quality. The result? Happier teams, satisfied managers, and software that evolves with ease. And it’s automated.

Data privacy and security

Your code is your company's intellectual property, and CodeScene ACE ensures it is never stored or used as AI training data. Only the minimal necessary snippets are processed, and your complete codebase is never shared with AI. We also protect your code with industry-standard encryption and secure protocols.

More than an AI coding tool

Unlike Copilot, which focuses on code generation, CodeScene ACE automatically improves existing code by fixing technical debt, whether the code is AI-generated or handwritten.

Frequently asked questions

Can't find the answer here? Visit our Help Center.

Does CodeScene ACE store any of my source code?

No. CodeScene ACE never stores your code or uses it as AI training data. Only the minimal necessary snippets are processed, and your complete codebase is never shared with AI. Your code is also protected with industry-standard encryption and secure protocols.

What languages does ACE support?

CodeScene ACE, our AI-refactoring add-on, works with Java, JavaScript, TypeScript, JavaScript React, TypeScript React, and C#.

What code smells are supported?

Complex Conditional, Bumpy Road Ahead, Complex Method, Deep Nested Complexity, and Large Method.
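To make two of these smells concrete, here is a minimal hand-written sketch in TypeScript of what Deep Nested Complexity and a Complex Conditional can look like, together with one possible targeted refactoring. This is not output from ACE; the `Order` and `Customer` types, the function names, and the country codes are all hypothetical and invented for illustration.

```typescript
// Hypothetical domain types, invented for this illustration only.
interface Customer {
  active: boolean;
  country: string;
}

interface Order {
  items: number;
  paid: boolean;
  customer: Customer | null;
}

// Before: three levels of nesting (Deep Nested Complexity) wrapped around
// one long compound condition (Complex Conditional).
function canShipBefore(order: Order | null): boolean {
  if (order !== null) {
    if (order.customer !== null) {
      if (
        order.customer.active &&
        order.paid &&
        order.items > 0 &&
        (order.customer.country === "SE" || order.customer.country === "NO")
      ) {
        return true;
      }
    }
  }
  return false;
}

// After: a guard clause removes the nesting, and small named helpers
// give each part of the condition a readable name.
function isServiceable(customer: Customer): boolean {
  const inShippingRegion = customer.country === "SE" || customer.country === "NO";
  return customer.active && inShippingRegion;
}

function isReadyToShip(order: Order): boolean {
  return order.paid && order.items > 0;
}

function canShipAfter(order: Order | null): boolean {
  if (order === null || order.customer === null) {
    return false;
  }
  return isServiceable(order.customer) && isReadyToShip(order);
}
```

Both versions return the same result for every input; only the structure changes, which is the kind of small, behavior-preserving change described on this page.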

What LLMs are you using?

We use a combination of LLMs from various vendors, selecting the most suitable ones based on the type of code smell and programming language to deliver the best results. Currently, our LLMs include models from OpenAI, Anthropic, and Google.

Is CodeScene ACE free?

No. Reach out to Sales for pricing.

Do you train AI models based on our code?

No. Your code stays private and is never used to train AI models. We designed CodeScene ACE with strict privacy principles to protect your intellectual property.


Auto-refactor technical debt. Automatically.

Let CodeScene ACE make refactoring easier for you. Book a technical demo to learn how.