"Am I getting satisfactory code coverage?" Over the years, this had become the question I asked myself when deciding which unit tests to write. And I have to admit it was extremely satisfying to see the green bars in the CI/CD pipeline on my pull requests. But since joining CodeScene, I have been forced to revisit my reasons for writing automated tests and to come up with more intelligent questions to guide my decisions.

Of course, we all know that we need automated tests. There are huge savings in failing fast, and regression tests are a must to protect us from the risks of refactoring legacy code, or simply from making mistakes, human as we are. We also know that a wide base of automated tests in the test pyramid is desirable.

It is, however, becoming harder and harder to turn a blind eye to the costs of automated tests. The labor cost of writing an automated test is real, though probably decreasing steadily thanks to better framework support. But running a standard CI/CD pipeline that aims for fast turnaround times, perhaps even with trunk-based development, changes things. Each new automated test adds CPU time and, more importantly, a delay for the developers, testers, and end users waiting for new features. Finally, tests are code that must be maintained as the application evolves, which adds to the application's maintenance costs. After all, technical debt can exist in test code, not only in application code.

When I was interviewed for my current job as a developer at CodeScene, my passion for automated testing was one of my selling points. I obviously got the job, but my gut feeling was that my code coverage skills were not the primary reason I was hired. Since CodeScene is a company built around deep insights into code quality, this puzzled me at first. Might there be things that matter more than code coverage?

I have now spent a couple of months trying to figure this out, focusing on unit testing. I found two very important clues that have helped me formulate more intelligent questions about automated testing.

Do I need to keep it? Does it need to run in the pipeline?

I found the first clue in Michael Feathers' blog post "Unit Conversations". A central quote from it is: "When you have a REPL, you can call a function and learn how it works interactively."

My background as a Java programmer did not include working with a REPL, so I would write automated tests for my Java code base to learn how it worked. This is a perfectly legitimate activity for a developer. New code usually becomes part of existing code, or changes it. Even if that existing code is something you wrote yourself a week ago, it is usually a good idea to understand how it works before you decide where to incorporate your changes. And once you begin designing those changes, it is really nice to be able to take them for a quick test run while they evolve.

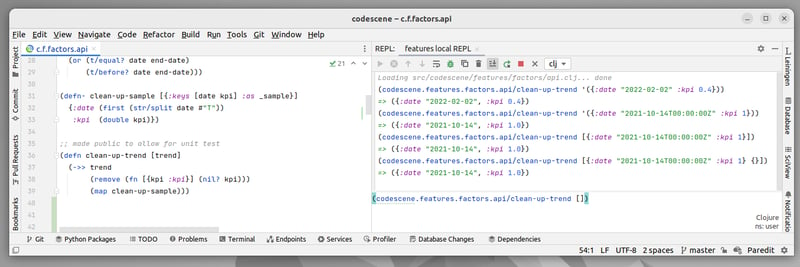

At CodeScene, Clojure is the main backend programming language, and the REPL is a central part of the Clojure developer experience. I can now explore the code base interactively. Once my favorite IDE is set up correctly, the threshold for trying out a function is extremely low: just load it into the REPL and run it. I can call a function with certain arguments, check the result, change the function, re-run the same command in the REPL, and so on.

Most of these runs don't need to be saved for posterity; their only purpose is to help me understand what to do next. And if I do want to save them, maybe for tomorrow, maybe to make a colleague's life easier in the future, I can turn to the nifty rich comment: a `(comment ...)` form kept in the source file, whose contents are skipped when the code is loaded but can be evaluated one form at a time in the REPL. Exploration runs can now be reused, but they do not need to run in the CI/CD pipeline, or even necessarily be maintained as the code changes.
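As an illustration, here is a minimal sketch of what such a rich comment might look like. The namespace `example.explore` and the function `word-frequencies` are hypothetical, made up for this example; only `comment`, `frequencies`, `re-seq` and `clojure.string/lower-case` are standard Clojure. Because `comment` ignores its body and returns `nil`, none of the exploration runs execute when the namespace is loaded or the test suite runs, yet each one can be evaluated on demand from the editor.

```clojure
(ns example.explore
  (:require [clojure.string :as str]))

(defn word-frequencies
  "Hypothetical function under exploration: counts how often each
  word occurs in a piece of text, ignoring case."
  [text]
  (frequencies (re-seq #"\w+" (str/lower-case text))))

;; Rich comment: `comment` discards its body and returns nil, so these
;; forms are skipped when the namespace is loaded or tested. In the
;; editor, evaluate them one at a time against the running REPL.
(comment
  (word-frequencies "The cat and the hat")
  ;; => {"the" 2, "cat" 1, "and" 1, "hat" 1}

  (word-frequencies "")
  ;; => {}

  ;; A small surprise learned by exploring: \w does not match the
  ;; apostrophe, so "don't" is split into two words.
  (word-frequencies "Don't panic")
  ;; => {"don" 1, "t" 1, "panic" 1}
  )
```

The last run shows the kind of thing exploration is good for: learning how the code actually behaves before deciding whether that behavior needs to change.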

Eventually, I understand the relevant part of the code base and have an idea of how my changes should be implemented. I might also have a few especially useful exploration runs that deserve to become automated tests, in which case I simply add them to the existing tests. But I have not bloated the unit test suite with all my experiments, because I kept asking myself whether each experiment really needed to be a unit test.
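A hypothetical sketch of promoting just one exploration run into a unit test with `clojure.test` is shown here. The namespace `example.explore-test` and the function `word-frequencies` are invented for illustration. The function is defined inline so the snippet runs on its own; in a real project the test namespace would require the source namespace instead.

```clojure
(ns example.explore-test
  (:require [clojure.string :as str]
            [clojure.test :refer [deftest is testing run-tests]]))

;; Hypothetical function, defined here only to keep the sketch
;; self-contained; normally it lives in its own source namespace.
(defn word-frequencies [text]
  (frequencies (re-seq #"\w+" (str/lower-case text))))

;; Only the run worth guarding against regressions is promoted to a
;; test. The other REPL experiments stay in a rich comment, or are
;; simply thrown away.
(deftest word-frequencies-ignores-case
  (testing "words differing only in case are counted together"
    (is (= {"the" 2 "cat" 1 "and" 1 "hat" 1}
           (word-frequencies "The cat and the hat")))))
```

From the REPL, `(run-tests 'example.explore-test)` runs only this namespace; in the pipeline, whatever test runner the project already uses would pick it up alongside the existing tests.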

(This train of thought also leads me to think about test-driven vs REPL-driven development, but that is best discussed with good friends over a nice crisp Pinot Gris for now...)

Is all code equal?

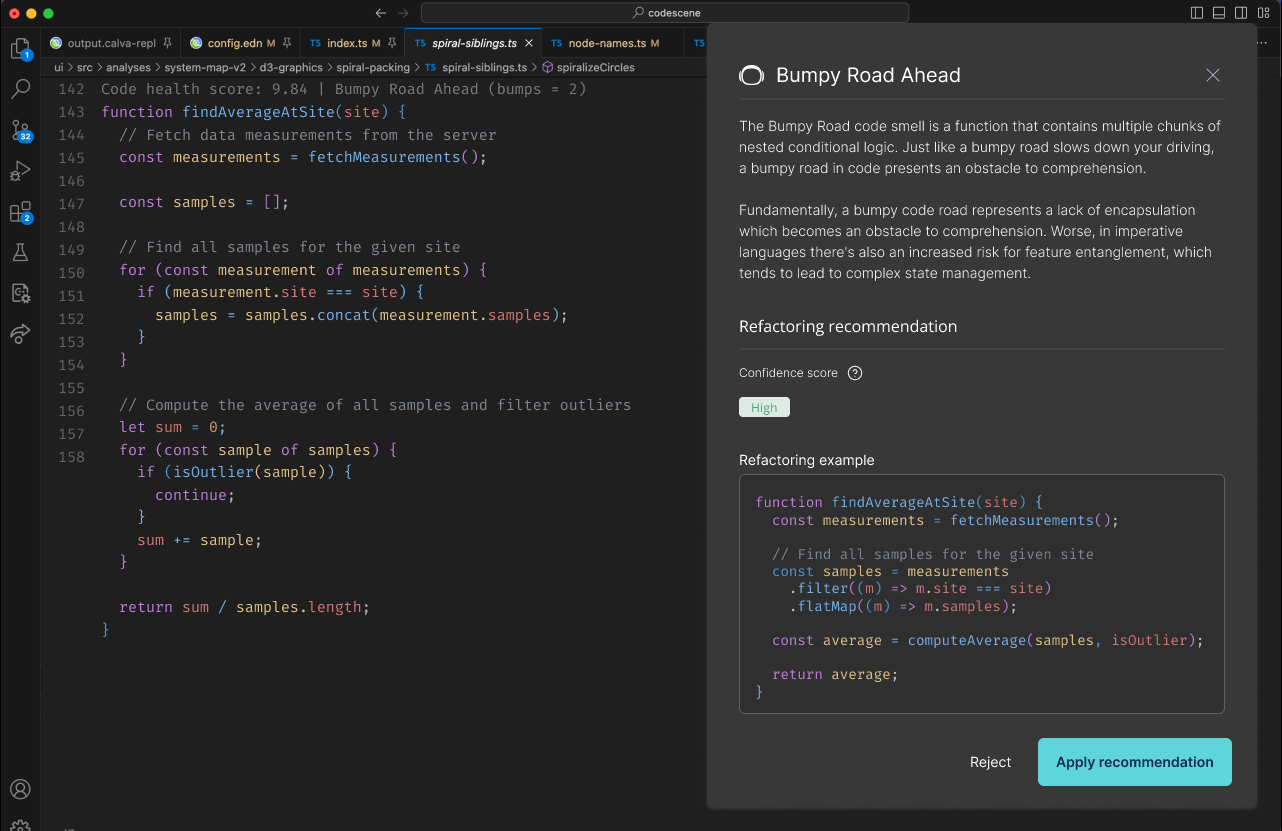

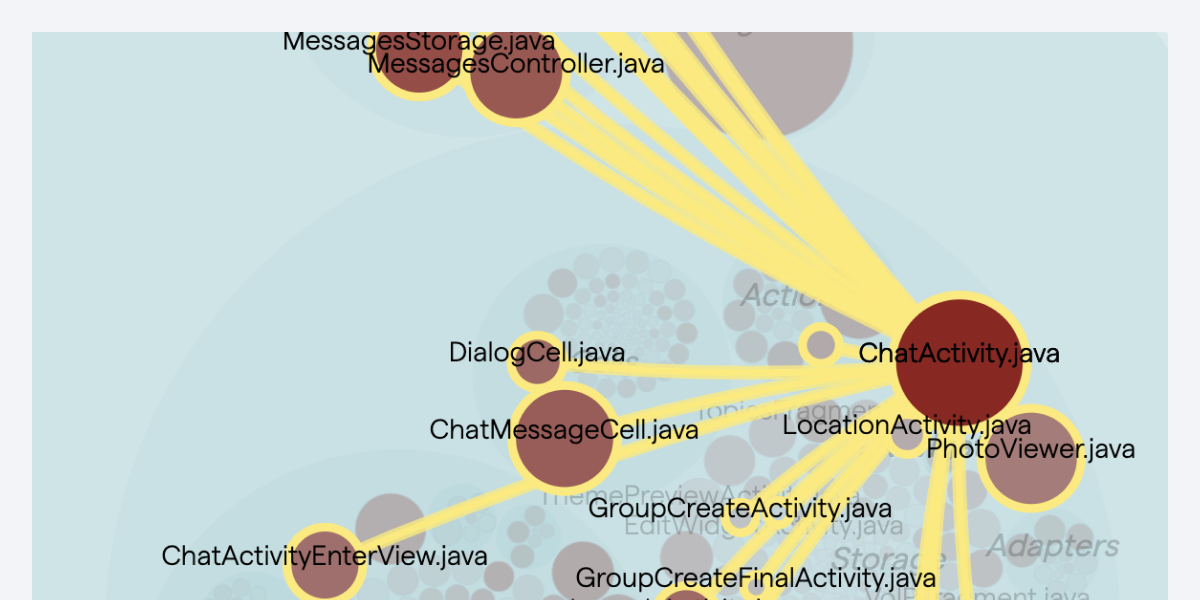

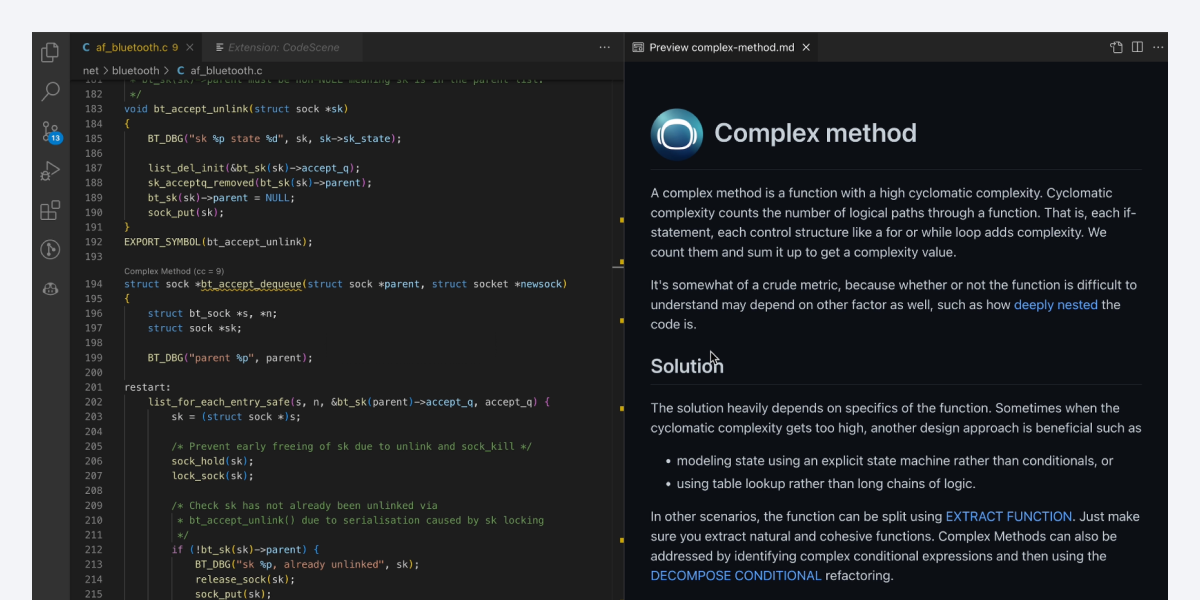

The second clue is, of course, extremely obvious: the CodeScene product itself. We eat our own dog food at CodeScene, so as a developer I get feedback on my code that is very different from code coverage. This helps me realize that not all code is equal, so not all code needs the same unit testing effort.

- The pull request integration tells me early on what risks my code involves. Low-risk code needs fewer unit tests than high-risk code.

- CodeScene's focus on prioritizing improvements is central. Being smarter about where you spend your resources is key to finding the balance between costs and benefits, whether for technical debt or automated testing. So if my code touches a hotspot (code that is changed frequently), that is a clue to prioritize unit testing.

- Ultimately, I want to avoid deploying code that will result in bugs, or "unplanned work", as CodeScene phrases it. The focus on delivery risks gives me feedback that supports my decisions about where to concentrate unit testing.

And at the end of the day, I, as a developer, am a bit lazy and rather susceptible to the quick fixes I get from my CI/CD pipeline and team metrics. Like one of Pavlov's dogs, I start wagging my tail when I get a green check mark, and I try to get another one the next time. Asking myself whether all code is equal is a shortcut to these fixes, and CodeScene supports me in finding the answers.

What now?

Having the opportunity to grow in my profession as a developer is great, and I feel like I have started a journey towards a deeper understanding of software testing in general and automated testing in particular. Unit tests are important, but are often seen as a matter of "personal hygiene" for the individual developer. Their place in the test pyramid, however, indicates that they concern the entire organization. They influence, and are influenced by, the other types of tests, automated as well as manual. This calls for communication and coordination that I have yet to explore.

So I am sure there are plenty of intelligent questions left to ask myself. I'll go off looking for some more clues now...

Also, before I forget, here is an interesting blog post from my colleague Simon: Refactoring components in React with custom hooks.